When Enterprise Defaults Become Enterprise Debt

Note on examples: The scenarios below are anonymized composites. They’re not a critique of any one organization; they’re patterns that repeat across industries. The goal isn’t to “modernize for fun.” It’s to protect speed-to-market and reliability as systems and organizations scale.

Why this matters

Most enterprises don’t lose because they picked the “wrong” framework or cloud provider. They lose because old defaults - once rational - become invisible policy.

The 90s and early 2000s optimized for constraints that were real at the time:

- hardware was expensive

- automation was immature

- environments were scarce

- security controls were largely manual

- uptime was achieved by cautious change, not by safe change

Those constraints have shifted. But many organizations still run on architectural and governance defaults designed for a different era.

The result is predictable:

- innovation slows (lead time grows)

- quality degrades (late integration + big-bang changes)

- reliability suffers (risk is batched, blast radius expands)

- engineers spend more time navigating the system than improving it

If you want a single-sentence summary: old patterns don’t just slow delivery - they also create the conditions for outages.

TL;DR

- Retire “analysis as delivery.” Timebox discovery and ship thin vertical slices.

- Treat cloud primitives as primitives, not research projects (e.g., object storage is solved).

- Default to containers + orchestration for most stateless services; use VMs deliberately, not reflexively. [5]

- Replace ticket queues and routine approval boards with guardrails + paved roads + policy-as-code. [7][8]

- Measure what matters: lead time, deploy frequency, change failure rate, MTTR. [1][2]

- Modernization works best as an incremental program, not a rewrite (Strangler Fig pattern). [12]

Contents

- Pattern 1: Analysis as a substitute for delivery

- Pattern 2: Reinventing commodity infrastructure

- Pattern 3: VM-first thinking as the default

- Pattern 4: Ticket-driven infrastructure

- Pattern 5: Change Advisory Board for routine changes

- Pattern 6: The shared database empire

- Pattern 7: Central integration as a chokepoint

- Pattern 8: Perma-POCs and innovation theater

- Replace committees with guardrails

- Modernize without a rewrite

- Verification: how you know it’s working

- A practical checklist

- References

Pattern 1: Analysis as a substitute for delivery

What it looks like

A team spends months (sometimes a year) doing “analysis” for a capability that won’t be used until it’s built - often with the intention of eliminating all risk up front.

Common examples:

- multi-tenant “high availability image storage” designed from scratch

- designing bespoke event systems when managed queues exist

- writing 40-page architecture documents before the first running slice exists

Why it existed

When provisioning took weeks and environments were scarce, analysis was a rational risk-reducer.

The hidden tax

- You push real learning to the end (integration failures happen late).

- Decisions get made with imaginary constraints, not measured ones.

- Teams optimize for “approval” rather than “outcome.”

The replacement pattern

Timebox discovery and require a running slice early.

A strong default:

- 1-2 week spike to validate constraints

- a thin vertical slice in production (even behind a flag)

- iterate based on real telemetry and user feedback
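
To make "in production, even behind a flag" concrete, here is a minimal sketch of a percentage rollout gate. The flag name `new-image-storage`, the handler, and the bucketing scheme are hypothetical choices for illustration, not a specific feature-flag product's API.

```python
import hashlib


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """Return True if this user falls inside the flag's rollout percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    # Hash flag + user so each user lands in a stable bucket per flag.
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in 0..99
    return bucket < percent


def image_upload_handler(user_id: str, rollout_percent: int) -> str:
    """Hypothetical handler: route a small share of users to the thin slice."""
    if in_rollout("new-image-storage", user_id, rollout_percent):
        return "new-slice"
    return "existing-path"
```

Because the bucket is derived from a hash rather than a random draw, a user who sees the new slice at 10% still sees it at 50%, which keeps telemetry comparable as the rollout widens. Setting the percentage to 0 turns the slice off without a deploy.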

Transition step (low drama)

Create an “RFC-lite” template:

- problem statement + constraints

- 1-2 options with tradeoffs

- a plan to measure (latency, cost, reliability)

- a thin-slice milestone date

Pattern 2: Reinventing commodity infrastructure

What it looks like

Teams treat widely proven primitives as novel:

- object storage

- queues

- identity

- metrics + tracing

- load balancing

A classic symptom: “We need to design HA multi-tenant object storage,” as if durable object storage isn’t already a standard building block.

Why it existed

On-prem and early hosting eras forced you to build a lot yourself.

The hidden tax

- Reinventing primitives becomes a multi-quarter project.

- Reliability becomes your problem (and you will be on call for it).

- The business pays for the same capability twice: once in time, and again in incidents.

The replacement pattern

Default to managed or proven primitives unless you have a documented reason not to.

Modern object storage services are explicitly designed for very high durability and availability. Amazon S3, for example, documents a durability design target of 99.999999999% (11 nines); details vary by provider and storage class. [11]

Transition step

Maintain a “Reference Implementations” catalog:

- “How we do object storage”

- “How we do queues”

- “How we do auth”

- “How we do telemetry”

If the default is documented and supported, teams stop re-litigating fundamentals.

Pattern 3: VM-first thinking as the default

What it looks like

Everything runs on VMs because “that’s what we do,” even when the workload is a stateless API, worker, or event consumer.

Why it existed

VMs were the universal unit of deployment for a long time, and they map cleanly to org boundaries (“this server is mine”).

The hidden tax

- drift (snowflake servers)

- slow rollouts

- inconsistent security posture

- wasted compute due to poor bin-packing

- limited standardization across services

The replacement pattern

For many enterprise services, containers orchestrated by Kubernetes are a strong default for stateless workloads. Kubernetes itself describes Deployments as a good fit for managing stateless applications where Pods are interchangeable and replaceable. [5]

This doesn’t mean “Kubernetes for everything,” but it does mean:

- prefer declarative workloads with health checks and rollout controls

- keep VMs for deliberate cases (legacy constraints, special licensing, unique state, or when orchestration adds no value)

Transition step

Start with “Kubernetes-first for new stateless services,” not a migration mandate.

Then build operational guardrails:

- resource requests/limits so services behave predictably under load [6]

- standardized readiness/liveness probes

- standard ingress + auth patterns
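
One way to turn these guardrails into something enforceable is a small check that runs in CI against each Deployment manifest. The sketch below assumes the manifest has already been parsed into a Python dict and uses the standard Kubernetes field names (`resources.requests`, `resources.limits`, `readinessProbe`, `livenessProbe`). The rule set itself, including requiring both CPU and memory limits, is a hypothetical house policy, not a Kubernetes requirement.

```python
from typing import Any


def guardrail_violations(deployment: dict[str, Any]) -> list[str]:
    """Return human-readable violations for a Deployment-shaped dict."""
    violations: list[str] = []
    containers = (
        deployment.get("spec", {})
        .get("template", {})
        .get("spec", {})
        .get("containers", [])
    )
    if not containers:
        violations.append("no containers defined")
    for container in containers:
        name = container.get("name", "<unnamed>")
        resources = container.get("resources", {})
        # Assumed policy: every container declares cpu and memory for both sections.
        for section in ("requests", "limits"):
            for resource in ("cpu", "memory"):
                if resource not in resources.get(section, {}):
                    violations.append(f"{name}: missing resources.{section}.{resource}")
        # Assumed policy: both probes are mandatory for stateless services.
        for probe in ("readinessProbe", "livenessProbe"):
            if probe not in container:
                violations.append(f"{name}: missing {probe}")
    return violations


example = {
    "spec": {"template": {"spec": {"containers": [{
        "name": "api",
        "resources": {
            "requests": {"cpu": "100m", "memory": "128Mi"},
            "limits": {"memory": "256Mi"},
        },
        "readinessProbe": {"httpGet": {"path": "/healthz", "port": 8080}},
    }]}}}
}
```

For `example`, the check reports a missing CPU limit and a missing liveness probe. A pipeline could fail the build when the returned list is non-empty, which gives teams immediate feedback instead of a review comment days later.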

Pattern 4: Ticket-driven infrastructure

What it looks like

Need a database? Ticket. Need an environment? Ticket. Need DNS? Ticket. Need a queue? Ticket.

Eventually, the ticketing system becomes the true control plane.

Why it existed

It’s a reasonable response when:

- environments are scarce

- changes are risky

- platform knowledge is specialized

The hidden tax

- queues become normalized (“it takes 3 weeks to get a namespace”)

- teams route around the platform

- reliability doesn’t improve; delivery just slows

The replacement pattern

Self-service via GitOps and platform “paved roads.”

OpenGitOps describes itself as a set of open-source standards and best practices for adopting a structured, standardized approach to GitOps. [7] The point isn’t a specific tool - it’s the principle: desired state is declarative and auditable.
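
The "declarative desired state" principle can be sketched as a diff between what the repository declares and what is actually running. This is a simplified illustration, not how any particular GitOps tool is implemented; the resource names and the `plan_changes` helper are hypothetical.

```python
from typing import Any


def plan_changes(
    desired: dict[str, dict[str, Any]],
    actual: dict[str, dict[str, Any]],
) -> dict[str, list[str]]:
    """Compare declared state (from Git) with observed state and list the actions needed."""
    return {
        "create": sorted(name for name in desired if name not in actual),
        "update": sorted(
            name for name in desired
            if name in actual and desired[name] != actual[name]
        ),
        "delete": sorted(name for name in actual if name not in desired),
    }


# Hypothetical inventory: what the repo declares vs. what the platform reports.
desired_state = {
    "queue/orders": {"retention_hours": 72},
    "namespace/payments": {"team": "payments"},
}
actual_state = {
    "queue/orders": {"retention_hours": 24},
    "queue/legacy-import": {"retention_hours": 24},
}
plan = plan_changes(desired_state, actual_state)
```

Here `plan` lists `namespace/payments` to create, `queue/orders` to update, and `queue/legacy-import` to delete. The request that used to be a ticket becomes a reviewed change to the declared state, and the audit trail is the commit history.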

Transition step

Pick one high-frequency request and eliminate it:

- “create a service with a standard ingress/auth/telemetry”

- “provision a queue”

- “create a dev environment”

Make the paved road the path of least resistance.

Pattern 5: Change Advisory Board for routine changes

What it looks like

Every change - routine or risky - requires synchronous approval.

Why it existed

When changes were large, rare, and manual, centralized review reduced catastrophic surprises.

The hidden tax

- you batch changes (bigger releases are riskier)

- emergency changes bypass process (creating inconsistency)

- “approval” becomes the goal rather than evidence of safety

DORA’s guidance on streamlining change approval emphasizes making the regular change process fast and reliable enough that it can handle emergencies, and reframes how CAB fits into continuous delivery. [3] Continuous delivery literature makes a similar point: smaller, more frequent changes reduce risk and ease remediation. [4]

The replacement pattern

Move to evidence-based change approval:

- automated tests

- policy-as-code checks

- progressive delivery (canaries, phased rollouts)

- real-time telemetry tied to the release
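
As an illustration of "evidence instead of approval," the sketch below shows a canary gate that compares the error rate of a new release against the current baseline. The thresholds (`min_requests`, `max_absolute_increase`) and the three outcomes are assumptions chosen for the example; real gates typically also look at latency and saturation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WindowStats:
    """Request and error counts observed over one measurement window."""
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(
    baseline: WindowStats,
    canary: WindowStats,
    min_requests: int = 500,
    max_absolute_increase: float = 0.01,
) -> str:
    """Return 'wait', 'rollback', or 'promote' based on observed error rates."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic yet to judge the canary
    if canary.error_rate - baseline.error_rate > max_absolute_increase:
        return "rollback"  # canary is measurably worse than the baseline
    return "promote"
```

With a baseline of 50 errors in 10,000 requests, a canary with 6 errors in 1,000 requests is promoted, while one with 40 errors in 1,000 requests is rolled back. The decision is recorded alongside the release, which gives auditors better evidence than a meeting note.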

Transition step

Keep CAB, but change its scope:

- focus on high-risk changes and cross-team coordination

- use automation and metrics for routine changes

Pattern 6: The shared database empire

What it looks like

A central database is shared by many services. Teams coordinate schema changes across multiple apps and releases.

Microservices.io describes the “shared database” pattern explicitly: multiple services access a single database directly. [10]

Why it existed

It’s simple at first:

- one place for data

- easy joins

- one backup plan

The hidden tax

- coupling spreads everywhere

- every change becomes cross-team work

- reliability suffers because one DB problem becomes everyone’s problem

- schema evolution becomes political

The replacement pattern

Prefer service-owned data boundaries. Microservices.io’s “database per service” pattern describes keeping a service’s data private and accessible only via its API. [9]

Transition step

You don’t have to “microservices everything.” Start by:

- carving out new tables owned by one service

- introducing an API boundary

- migrating consumers gradually

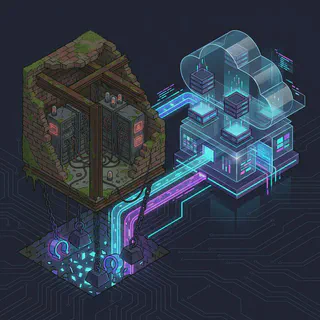

Pattern 7: Central integration as a chokepoint

What it looks like

All integrations must go through a single shared integration layer/team (classic enterprise service bus, or ESB, gravity).

Why it existed

Centralizing integration gave consistency when:

- protocols were messy

- tooling was expensive

- teams lacked automation

The hidden tax

- integration lead times explode

- teams stop experimenting

- one backlog becomes everyone’s bottleneck

The replacement pattern

Standardize:

- interfaces (auth, tracing, deployment, contract testing)

- platform guardrails

…not every internal implementation detail.

Transition step

Carve out one “self-service integration” paved road:

- standard service template

- standard auth

- standard telemetry

- contracts + examples

Pattern 8: Perma-POCs and innovation theater

What it looks like

Prototypes exist forever, never becoming production systems.

Especially common with AI initiatives:

- impressive demos

- no production constraints

- no ownership for operability

Why it existed

POCs are a safe way to explore unknowns.

The hidden tax

- teams lose trust (“innovation never ships”)

- production teams inherit half-baked work

- opportunity cost compounds

The replacement pattern

From day one, require:

- an owner

- a production path

- a thin slice in a real environment

- explicit safety requirements (timeouts, budgets, telemetry)

Transition step

Make “POC exit criteria” mandatory:

- what metrics prove value?

- what is the minimum shippable slice?

- what must be true for reliability and security?

Replace committees with guardrails

A recurring theme: humans are expensive control planes.

The modern move is to convert “tribal rules” into:

- templates

- automation

- policy-as-code

- paved paths

Microsoft’s platform engineering work describes “paved paths” within an internal developer platform as recommended paths to production that guide developers through requirements without sacrificing velocity. [8]

Guardrails beat gatekeepers because guardrails are:

- consistent

- fast

- auditable

- scalable

Modernize without a rewrite

Big-bang rewrites are expensive and risky. Incremental modernization is usually the winning move.

The Strangler Fig pattern is a well-known approach: wrap or route traffic so you can replace parts of a legacy system gradually. [12]

Practical approach:

- put a facade in front of the legacy surface

- carve off one slice at a time

- measure outcomes

- keep rollback easy
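
The facade step can be as small as a routing decision in front of the legacy system. The sketch below is a simplified illustration of that idea; the path prefixes and the `choose_backend` helper are hypothetical, and a real facade would usually live in a gateway or reverse proxy configuration.

```python
def choose_backend(path: str, migrated_prefixes: tuple[str, ...]) -> str:
    """Route a request path to 'new' if its slice has been carved off, else 'legacy'."""
    for prefix in migrated_prefixes:
        base = prefix.rstrip("/")
        # Match the prefix itself or anything beneath it, but not look-alike paths.
        if path == prefix or path.startswith(base + "/"):
            return "new"
    return "legacy"


# Hypothetical state: only the invoices slice has been migrated so far.
MIGRATED = ("/invoices",)

routes = {
    path: choose_backend(path, MIGRATED)
    for path in ("/invoices/42", "/invoices-archive/1", "/orders/7")
}
```

In this example only `/invoices/42` goes to the new service. Rollback is a one-line change: removing a prefix from `MIGRATED` sends that slice back to the legacy system without touching either codebase.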

This isn’t glamorous. It works.

Verification: how you know it’s working

If you want to avoid “modernization theater,” measure.

DORA’s metrics guidance is a solid baseline: deployment frequency, lead time for changes, change failure rate, and time to restore service (MTTR). [1] The 2024 DORA report continues to focus on the organizational capabilities that drive high performance. [2]

A simple evidence loop:

- Pick one value stream (one product or platform slice).

- Baseline the four DORA metrics.

- Remove one friction point (one pattern).

- Re-measure.

If your metrics don’t move, you didn’t remove the real constraint.
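
A baseline does not need a dashboard product to get started. The sketch below computes the four metrics from a list of deployment records. The `Deployment` record shape and the choice of medians are assumptions for illustration; DORA's guide describes the metrics, not this calculation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass(frozen=True)
class Deployment:
    """Hypothetical record: one production deployment of one change."""
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None


def dora_summary(deploys: list[Deployment], window_days: int) -> dict[str, float]:
    """Summarize the four DORA metrics over a measurement window."""
    if not deploys:
        raise ValueError("need at least one deployment to baseline")
    lead_hours = [
        (d.deployed_at - d.committed_at).total_seconds() / 3600 for d in deploys
    ]
    failures = [d for d in deploys if d.failed]
    restore_hours = [
        (d.restored_at - d.deployed_at).total_seconds() / 3600
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deploys_per_day": len(deploys) / window_days,
        "median_lead_time_hours": median(lead_hours),
        "change_failure_rate": len(failures) / len(deploys),
        # 0.0 here means "no restored failures in the window", not "instant recovery".
        "median_time_to_restore_hours": median(restore_hours) if restore_hours else 0.0,
    }
```

Run it once before removing a friction point and again afterward, over windows of the same length, so the comparison reflects the change rather than the calendar.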

A practical checklist

If you’re trying to retire “enterprise debt” safely:

Delivery

- Timebox analysis; require a running slice early.

- Prefer small changes and frequent releases; avoid batching.

Platform

- Provide a paved road for common workflows (service template, auth, telemetry). [8]

- Remove ticket queues for repeatable requests (self-service + GitOps). [7]

Reliability

- Standardize timeouts, retries, budgets, and resource requests/limits. [6]

- Use progressive delivery where risk is high.

Architecture

- Reduce shared DB coupling; establish service-owned boundaries. [9][10]

- Modernize incrementally (Strangler Fig), not via big-bang rewrites. [12]

Governance

- Replace routine approvals with evidence: tests + policy-as-code + telemetry. [3][4]

References

[1] DORA - “DORA’s software delivery performance metrics (guide)”. https://dora.dev/guides/dora-metrics/

[2] DORA - “Accelerate State of DevOps Report 2024”. https://dora.dev/research/2024/dora-report/

[3] DORA - “Streamlining change approval (capability)”. https://dora.dev/capabilities/streamlining-change-approval/

[4] ContinuousDelivery.com - “Continuous Delivery and ITIL: Change Management”. https://continuousdelivery.com/2010/11/continuous-delivery-and-itil-change-management/

[5] Kubernetes docs - “Workloads (Deployments are a good fit for stateless workloads)”. https://kubernetes.io/docs/concepts/workloads/

[6] Kubernetes docs - “Resource Management for Pods and Containers (requests/limits)”. https://kubernetes.io/docs/concepts/configuration/manage-resources-containers/

[7] OpenGitOps - “What is OpenGitOps?” and project background. https://opengitops.dev/ and https://opengitops.dev/about/

[8] Microsoft Engineering Blog - “Building paved paths: the journey to platform engineering”. https://devblogs.microsoft.com/engineering-at-microsoft/building-paved-paths-the-journey-to-platform-engineering/

[9] Microservices.io - “Database per service” pattern. https://microservices.io/patterns/data/database-per-service

[10] Microservices.io - “Shared database” pattern. https://microservices.io/patterns/data/shared-database.html

[11] AWS documentation - “Data protection in Amazon S3 (durability/availability design goals)”. https://docs.aws.amazon.com/AmazonS3/latest/userguide/DataDurability.html

[12] Martin Fowler - “Strangler Fig Application” (legacy modernization pattern). https://martinfowler.com/bliki/StranglerFigApplication.html