Tool Discovery at Scale: Solving the Million Tool Problem

Why this matters

Tool-using agents are powerful because they can do real work: read systems, change systems, orchestrate workflows.

The trap is what I call the Million Tool Problem:

The moment you have “enough tools,” tool selection becomes harder than tool execution.

At small scale, you can stuff tool schemas into the prompt and hope the model chooses correctly. At scale, that approach breaks:

- token budgets explode

- accuracy drops (models confuse similar tools)

- latency rises (bigger prompts, more reasoning)

- safety degrades (wrong tool, wrong args, wrong side effects)

This isn’t hypothetical. Tool-use research exists because selection is hard. Benchmarks like ToolBench and AgentBench exist specifically to evaluate this capability in interactive settings. [3][6]

This post is a production-first design for tool discovery that stays:

- fast (low latency, bounded prompt size)

- safe (tool contracts and policy gates)

- debuggable (you can explain why a tool was chosen)

- maintainable (tool catalogs evolve constantly)

TL;DR

- Tool discovery is an information retrieval (IR) problem plus a policy problem, not a prompt trick.

- Use a 3-stage selector:

  - coarse filter (tags / domain / allowlist)

  - retrieval (BM25 + embeddings)

  - rerank (LLM or learned ranker)

- Treat tool descriptions as a product:

  - consistent naming

  - sharp “when to use” / “when not to use”

  - examples of correct arguments

- Add tool quality scoring (latency, error rate, drift, safety incidents).

- Build a tight evaluation harness (ToolBench/StableToolBench ideas apply). [3][4]

Contents

- Why “include all tools” fails

- The 3-stage tool selector

- Tool metadata that makes models smarter

- Ranking: BM25 + embeddings + rerank

- Safety: allowlists, “danger gates,” and budgets

- Quality scoring and tool quarantine

- Debuggability: explainable tool selection

- A minimal reference architecture

- A production checklist

- References

Why “include all tools” fails

Token and latency pressure

Even if your tool schemas are “small,” they add up. Once you cross a few dozen tools, you spend more tokens describing tools than describing the task.

Confusability

Tools with similar names or overlapping domains cause selection errors:

- search_events vs list_events vs get_event

- create_task vs create_issue vs create_ticket

The long tail problem

Most catalogs have a long tail:

- 10 tools get used daily

- 100 tools get used weekly

- 1,000 tools are niche, but critical when needed

This is exactly the kind of situation information retrieval was invented for.

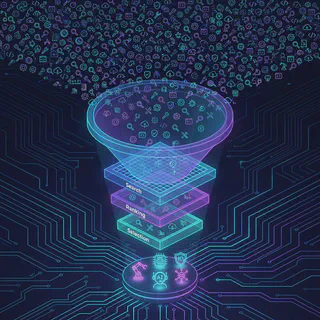

The 3-stage tool selector

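Think like a search engine: a mandatory policy gate (Stage 0) comes first, followed by three ranking stages that each shrink the candidate set.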

Stage 0: Policy filter (mandatory)

Before ranking, enforce policy:

- which tools is this client allowed to call?

- which tools are enabled for this tenant/environment?

- which tools are safe for this context (read-only mode, incident mode, etc.)?

MCP makes tool discovery explicit via listing tools and schemas. That’s an interface you can mediate with policy. [1]
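The three policy questions above can be sketched as a plain filter that runs before any ranking. This is a minimal illustration, not an MCP API: the `Tool` and `RequestContext` types, their field names, and the side-effect labels are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    name: str
    side_effect: str                  # assumed labels: "read", "create", "update", "delete"
    tenants: frozenset = frozenset()  # tenants for which the tool is enabled


@dataclass(frozen=True)
class RequestContext:
    tenant: str
    allowlist: frozenset              # tool names this client may call
    read_only: bool = False


def policy_filter(tools, ctx):
    """Return only the tools the caller is allowed to see in this context."""
    visible = []
    for tool in tools:
        if tool.name not in ctx.allowlist:
            continue  # client is not permitted to call this tool
        if ctx.tenant not in tool.tenants:
            continue  # tool is not enabled for this tenant
        if ctx.read_only and tool.side_effect != "read":
            continue  # context forbids mutations
        visible.append(tool)
    return visible


catalog = [
    Tool("list_events", "read", frozenset({"acme"})),
    Tool("delete_event", "delete", frozenset({"acme"})),
    Tool("list_pods", "read", frozenset({"globex"})),
]
ctx = RequestContext(
    tenant="acme",
    allowlist=frozenset({"list_events", "delete_event", "list_pods"}),
    read_only=True,
)
visible = [t.name for t in policy_filter(catalog, ctx)]
print(visible)  # ['list_events']
```

Because the filter runs first, a tool that fails policy never reaches retrieval or rerank, so it cannot be selected by accident.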

Stage 1: Coarse routing (cheap)

Route into the right “tool neighborhood” using:

- tags (kubernetes, calendar, email)

- domains (“devops”, “productivity”, “security”)

- environment (“prod” vs “dev”)

Goal: reduce the candidate set from 10,000 -> 300.
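A rough sketch of coarse routing, assuming each catalog entry carries a `tags` list and an `environment` field (both hypothetical names). How the wanted tags are inferred from the request (a keyword map, a small classifier) is left out here.

```python
def coarse_route(tools, wanted_tags, environment):
    """Keep tools in the right environment that share at least one tag with the request."""
    wanted = set(wanted_tags)
    return [
        tool for tool in tools
        if tool["environment"] == environment and wanted & set(tool["tags"])
    ]


catalog = [
    {"name": "list_pods", "tags": ["kubernetes", "devops"], "environment": "prod"},
    {"name": "restart_deployment", "tags": ["kubernetes", "devops"], "environment": "dev"},
    {"name": "find_free_slots", "tags": ["calendar", "productivity"], "environment": "prod"},
]
candidates = coarse_route(catalog, ["kubernetes"], "prod")
print([t["name"] for t in candidates])  # ['list_pods']
```

The point of this stage is that it is a set intersection, so it stays cheap even when the catalog is large.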

Stage 2: Retrieval (BM25 + embeddings)

Run a hybrid search over:

- tool name

- tool description

- parameter names

- example calls

- “when not to use” hints

Hybrid search is pragmatic:

- lexical retrieval (BM25-style) is great for exact matches and acronyms [9]

- embeddings are great for semantic similarity [7]

Goal: 300 -> 30.
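The post does not prescribe how to merge the lexical and embedding result lists. One common option is reciprocal rank fusion (RRF), which needs only the rank positions and not comparable scores. The sketch below assumes each retriever returns an ordered list of tool names; `k=60` is a conventional default, not a tuned value.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of tool names into one, best first."""
    scores = {}
    for ranking in rankings:
        for rank, name in enumerate(ranking, start=1):
            scores[name] = scores.get(name, 0.0) + 1.0 / (k + rank)
    # Sort by fused score, breaking ties by name for deterministic output.
    return sorted(scores, key=lambda name: (-scores[name], name))


lexical = ["get_event", "list_events", "search_events"]
semantic = ["search_events", "get_event", "create_task"]
fused = reciprocal_rank_fusion([lexical, semantic])
print(fused)  # ['get_event', 'search_events', 'list_events', 'create_task']
```

Tools that appear in both lists rise to the top, which is usually the behavior you want from a hybrid stage.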

Stage 3: Rerank (expensive, accurate)

Rerank the top-K tools using:

- an LLM judge (cheap if K is small)

- or a learned ranker

- or deterministic rules + a smaller LLM tie-breaker

Goal: 30 -> 5.

Then the agent sees a small, high-quality tool set.

Tool metadata that makes models smarter

If you want better tool selection, stop treating tool schemas as “just types.” Add metadata that improves discrimination.

Tool card fields (recommended)

- Name: stable, verb-first

- Purpose: one sentence

- When to use: 2-4 bullets

- When NOT to use: 2-4 bullets (this is underrated)

- Side effects: none / read-only / creates / updates / deletes

- Required arguments: and why they’re required

- Examples: 2-3 example invocations with realistic args

- Error modes: rate limit, auth, not found, validation

This reduces tool confusion because it gives the model features that distinguish one tool from its near-duplicates.
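The card fields above map naturally onto a small record type. This is one possible shape, with hypothetical field names, plus an `index_text` helper (also an assumption) that flattens the card into the text a retrieval index would see.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCard:
    name: str
    purpose: str
    when_to_use: list
    when_not_to_use: list
    side_effects: str           # e.g. "read-only", "creates", "updates", "deletes"
    required_args: dict         # argument name -> why it is required
    examples: list = field(default_factory=list)
    error_modes: list = field(default_factory=list)

    def index_text(self):
        """Flatten the card into one string for lexical or embedding indexing."""
        parts = [self.name.replace("_", " "), self.purpose]
        parts += [f"use when: {item}" for item in self.when_to_use]
        parts += [f"do not use when: {item}" for item in self.when_not_to_use]
        parts += [f"argument {arg}: {why}" for arg, why in self.required_args.items()]
        parts += self.examples
        return "\n".join(parts)


card = ToolCard(
    name="search_events",
    purpose="Full-text search over calendar events.",
    when_to_use=["the user gives keywords rather than an event ID"],
    when_not_to_use=["you already have the event ID (use get_event)"],
    side_effects="read-only",
    required_args={"query": "the keywords to match"},
    examples=['search_events(query="quarterly review")'],
    error_modes=["rate limit", "validation"],
)
print(card.index_text())
```

Keeping the card as structured data means the same source can feed the index, the prompt shown to the model, and catalog linting.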

Ranking: BM25 + embeddings + rerank

Lexical retrieval (BM25)

BM25 and probabilistic retrieval approaches are foundational in search. [9]

Practical benefit: it handles queries like:

- “S3”

- “JWT”

- “PodDisruptionBudget”

- “Cron”

…where embeddings can be inconsistent.
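To make the exact-match behavior concrete, here is a small from-scratch BM25 scorer. It uses the common non-negative IDF variant and the usual defaults `k1=1.5`, `b=0.75`; the tool names and descriptions are invented for the example, and a production system would more likely use a search engine's built-in BM25.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split on non-alphanumerics, so 'S3' and 'JWT' survive as tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Index:
    def __init__(self, docs, k1=1.5, b=0.75):
        self.names = list(docs)
        self.k1, self.b = k1, b
        self.term_counts = [Counter(tokenize(docs[name])) for name in self.names]
        self.lengths = [sum(counts.values()) for counts in self.term_counts]
        self.avg_len = sum(self.lengths) / len(self.lengths)
        self.doc_freq = Counter()
        for counts in self.term_counts:
            self.doc_freq.update(counts.keys())

    def score(self, query, i):
        total, n_docs = 0.0, len(self.names)
        for term in tokenize(query):
            tf = self.term_counts[i].get(term, 0)
            if tf == 0:
                continue
            df = self.doc_freq[term]
            idf = math.log(1 + (n_docs - df + 0.5) / (df + 0.5))
            norm = tf + self.k1 * (1 - self.b + self.b * self.lengths[i] / self.avg_len)
            total += idf * tf * (self.k1 + 1) / norm
        return total

    def search(self, query, top_k=5):
        scored = [(self.score(query, i), name) for i, name in enumerate(self.names)]
        hits = sorted((pair for pair in scored if pair[0] > 0), key=lambda p: (-p[0], p[1]))
        return [name for _, name in hits[:top_k]]


docs = {
    "get_pod_disruption_budget": "Read the PodDisruptionBudget for a Kubernetes workload",
    "validate_jwt": "Validate a JWT and return its claims",
    "list_s3_buckets": "List S3 buckets in an AWS account",
}
index = BM25Index(docs)
print(index.search("PodDisruptionBudget"))  # ['get_pod_disruption_budget']
print(index.search("JWT"))                  # ['validate_jwt']
```

Queries with no matching term return an empty list, which is a useful signal that the semantic side should carry the request.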

Embeddings

Sentence embeddings (like SBERT-style approaches) are designed to enable efficient semantic similarity search. [7]

Practical benefit: it handles intent queries like:

- “delete all tasks due tomorrow”

- “find calendar conflicts next week”

- “check if deployment is stuck”

Approximate nearest neighbor indexing

At scale, you’ll want ANN indexing (FAISS is a well-known library in this space). [8]
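The embedding lookup can be sketched as an exact, brute-force cosine scan; an ANN index would replace the scan once the catalog is large. The three-dimensional vectors below are hand-written placeholders standing in for the output of a real embedding model, and the tool names are invented.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_search(query_vec, tool_vecs, top_k=3):
    """Rank tool names by cosine similarity to the query vector (exact scan)."""
    ranked = sorted(tool_vecs, key=lambda name: (-cosine(query_vec, tool_vecs[name]), name))
    return ranked[:top_k]


# Placeholder embeddings; a real system would compute these with an embedding model.
tool_vecs = {
    "find_calendar_conflicts": [0.9, 0.1, 0.0],
    "delete_tasks": [0.1, 0.9, 0.1],
    "get_deployment_status": [0.0, 0.1, 0.9],
}
query_vec = [0.8, 0.2, 0.1]  # stands in for the embedding of "find calendar conflicts next week"
print(semantic_search(query_vec, tool_vecs, top_k=1))  # ['find_calendar_conflicts']
```

An exact scan is linear in catalog size, which is often fine after Stage 1 has already cut the candidates to a few hundred.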

Rerank

This is where you incorporate:

- tool quality score

- tenant policy

- “danger tool” gating

- recent tool drift

Reranking is also where you can enforce “don’t pick write tools unless necessary.”
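One way to express “don’t pick write tools unless necessary” is a fixed penalty on non-read tools that is lifted only when the request clearly needs a mutation. The weights, the `needs_write` flag, and the candidate fields below are illustrative assumptions, and both scores are assumed to be normalized to the range 0 to 1.

```python
def rerank(candidates, top_k=5, quality_weight=0.3, write_penalty=0.2, needs_write=False):
    """Blend relevance with tool quality and demote write tools unless a write is needed."""

    def final_score(candidate):
        score = (1 - quality_weight) * candidate["relevance"] + quality_weight * candidate["quality"]
        if candidate["side_effect"] != "read" and not needs_write:
            score -= write_penalty
        return score

    ranked = sorted(candidates, key=lambda c: (-final_score(c), c["name"]))
    return [c["name"] for c in ranked[:top_k]]


candidates = [
    {"name": "delete_task", "relevance": 0.82, "quality": 0.9, "side_effect": "delete"},
    {"name": "list_tasks", "relevance": 0.80, "quality": 0.9, "side_effect": "read"},
    {"name": "search_tasks", "relevance": 0.60, "quality": 0.4, "side_effect": "read"},
]
print(rerank(candidates))                    # ['list_tasks', 'delete_task', 'search_tasks']
print(rerank(candidates, needs_write=True))  # ['delete_task', 'list_tasks', 'search_tasks']
```

In the first call the delete tool has the highest raw relevance but still ranks below the read tool, which is the intended bias.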

Safety: allowlists, “danger gates,” and budgets

Tool discovery is not neutral. It’s an authorization problem.

Your selector should be policy-aware:

- Read-only mode: only surface read tools

- No-delete mode: deletes never appear

- Prod incident mode: allow observation tools, restrict mutation

- Human approval mode: show write tools, but require confirmation

Also: build budgets into selection. If a tool is expensive (slow, rate-limited, high blast radius), rank it lower unless strongly justified.

For tool-using agents, OWASP highlights prompt injection and excessive agency as key risks - exactly the failure modes you get when tools are over-exposed without gates. [10]
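The modes and the budget rule above can be sketched as a lookup table plus a score adjustment. The mode names, side-effect labels, and weights are assumptions. In particular, this sketch reads “restrict mutation” in prod incident mode as “updates need confirmation, creates and deletes are hidden,” which is only one possible interpretation.

```python
MODE_RULES = {
    "read_only": {"allowed": {"read"}, "confirm": set()},
    "no_delete": {"allowed": {"read", "create", "update"}, "confirm": set()},
    "prod_incident": {"allowed": {"read", "update"}, "confirm": {"update"}},
    "human_approval": {
        "allowed": {"read", "create", "update", "delete"},
        "confirm": {"create", "update", "delete"},
    },
}


def surface_tools(tools, mode):
    """Drop tools the mode forbids and flag the ones that need human confirmation."""
    rules = MODE_RULES[mode]
    surfaced = []
    for tool in tools:
        if tool["side_effect"] not in rules["allowed"]:
            continue
        surfaced.append({**tool, "needs_confirmation": tool["side_effect"] in rules["confirm"]})
    return surfaced


def budget_adjusted(relevance, cost, cost_weight=0.25):
    """Lower the score of expensive tools; cost is an assumed 0..1 estimate."""
    return relevance - cost_weight * cost


tools = [
    {"name": "list_tasks", "side_effect": "read"},
    {"name": "update_task", "side_effect": "update"},
    {"name": "delete_task", "side_effect": "delete"},
]
print([t["name"] for t in surface_tools(tools, "no_delete")])  # ['list_tasks', 'update_task']
```

Keeping the rules in data makes them easy to review and to test, which matters more for policy than for ranking.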

Quality scoring and tool quarantine

You need a tool quality score because tools drift:

- upstream APIs change

- auth breaks

- quotas shift

- tool server regressions happen

Track per tool:

- p50 / p95 latency

- error rate

- timeout rate

- “invalid argument” rate (often a selection problem)

- “unsafe attempt” rate (policy violations)

Then take action:

- quarantine tools with regression spikes

- degrade to read-only tools during outages

- route to backups (alternate implementations)
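A minimal sketch of per-tool health tracking over a rolling window, with a quarantine rule based on error rate. The window size, minimum call count, and threshold are placeholder values, and the `ToolHealth` class is hypothetical.

```python
from collections import deque


class ToolHealth:
    def __init__(self, window=100, min_calls=20, max_error_rate=0.2):
        self.calls = deque(maxlen=window)  # most recent (latency_ms, ok) pairs
        self.min_calls = min_calls
        self.max_error_rate = max_error_rate

    def record(self, latency_ms, ok):
        self.calls.append((latency_ms, ok))

    def error_rate(self):
        if not self.calls:
            return 0.0
        return sum(1 for _, ok in self.calls if not ok) / len(self.calls)

    def p95_latency(self):
        """Nearest-rank 95th percentile of latency in the window, or None if empty."""
        if not self.calls:
            return None
        latencies = sorted(latency for latency, _ in self.calls)
        rank = (95 * len(latencies) + 99) // 100  # integer ceil(0.95 * n)
        return latencies[rank - 1]

    def quarantined(self):
        """Quarantine only once there is enough data and the error rate is too high."""
        return len(self.calls) >= self.min_calls and self.error_rate() > self.max_error_rate


health = ToolHealth()
for i in range(20):
    health.record(latency_ms=100 + i, ok=(i % 2 == 0))  # every other call fails
print(health.error_rate(), health.p95_latency(), health.quarantined())  # 0.5 118 True
```

The minimum call count keeps a rarely used long-tail tool from being quarantined after a single failure.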

Debuggability: explainable tool selection

If you can’t answer “why did the agent pick that tool?”, you won’t be able to operate the system.

Log (or attach to traces) the selection evidence:

- query text

- candidate tools (top 30)

- retrieval scores

- rerank scores

- policy filters applied

- final selected tools and why

This also becomes training data later.
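The evidence listed above fits in one structured record per selection. The field names and example values below are illustrative, not a standard schema; the record is plain JSON so it can go to a log line or a trace attribute.

```python
import json


def build_selection_trace(query, filters_applied, candidates, selected):
    """Assemble a JSON-serializable record of one tool selection decision."""
    return {
        "query": query,
        "policy_filters": list(filters_applied),
        "candidates": [
            {
                "name": c["name"],
                "retrieval_score": c["retrieval_score"],
                "rerank_score": c.get("rerank_score"),  # None if cut before rerank
            }
            for c in candidates
        ],
        "selected": [{"name": name, "reason": reason} for name, reason in selected],
    }


trace = build_selection_trace(
    query="check if deployment is stuck",
    filters_applied=["tenant_allowlist", "read_only_mode"],
    candidates=[
        {"name": "get_deployment_status", "retrieval_score": 0.91, "rerank_score": 0.88},
        {"name": "list_pods", "retrieval_score": 0.74, "rerank_score": 0.52},
        {"name": "describe_deployment", "retrieval_score": 0.70},
    ],
    selected=[("get_deployment_status", "highest rerank score among read tools")],
)
print(json.dumps(trace, indent=2))
```

Recording candidates that were not selected is what makes the trace useful later, both for debugging near-misses and for building ranking training data.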

A minimal reference architecture

    +-------------------------------+
    |    Agent runtime (planner)    |
    +-------------------------------+
                    |
                    v
    +-------------------------------+
    |     Tool Selector Service     |
    |  - policy filter              |
    |  - hybrid retrieval           |
    |  - rerank                     |
    |  - tool quality weighting     |
    +-------------------------------+
                    |  returns top-K tools + schemas
                    v
    +-------------------------------+
    |        Agent execution        |
    |  - calls tools via MCP        |
    +-------------------------------+

Where MCP fits: MCP provides a standardized way for clients to discover tools and invoke them. [1]

The selector doesn’t replace MCP. It makes MCP usable at scale.
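The selector service itself can be sketched as a thin function that composes the stages in order. The stage callables here are toy stand-ins, and all names are hypothetical. Fetching the catalog from MCP servers and executing the chosen tool are out of scope for this sketch.

```python
def select_tools(query, catalog, policy, retrieve, rerank, top_k=5):
    """Run policy filter, retrieval, and rerank in order; return at most top_k tools."""
    permitted = [tool for tool in catalog if policy(tool)]
    candidates = retrieve(query, permitted)
    return rerank(query, candidates)[:top_k]


# Toy stand-ins for the real stages.
def read_only_policy(tool):
    return tool["side_effect"] == "read"


def keyword_retrieve(query, tools):
    words = set(query.lower().split())
    return [t for t in tools if words & set(t["description"].lower().split())]


def quality_rerank(query, tools):
    return sorted(tools, key=lambda t: (-t["quality"], t["name"]))


catalog = [
    {"name": "list_pods", "description": "list pods in a namespace", "side_effect": "read", "quality": 0.9},
    {"name": "delete_pod", "description": "delete a pod", "side_effect": "delete", "quality": 0.95},
    {"name": "describe_pod", "description": "describe one pod", "side_effect": "read", "quality": 0.7},
]
selected = select_tools("show pods", catalog, read_only_policy, keyword_retrieve, quality_rerank)
print([t["name"] for t in selected])  # ['list_pods']
```

Passing the stages in as callables keeps each one independently testable and lets you swap a retriever or ranker without touching the pipeline.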

A production checklist

Tool catalog hygiene

- Stable naming conventions.

- “When NOT to use” bullets exist.

- Examples exist for the top tools.

- Tool side effects are classified.

Selection pipeline

- Mandatory policy filter before ranking.

- Hybrid retrieval (lexical + embeddings). [7][9]

- Rerank top-K with quality + policy.

- Candidate set bounded (K is small).

Safety

- Dangerous tools are gated and not surfaced by default.

- Budget-aware ranking exists.

- OWASP LLM risks considered in tool exposure strategy. [10]

Operability

- Selection decisions are explainable (log evidence).

- Tool quality scoring exists and drives quarantine.

- Selection regressions are covered by evals (next article).

References

[1] Model Context Protocol (MCP) - Specification (Protocol Revision 2025-11-25): https://modelcontextprotocol.io/specification/2025-11-25

[2] MCP - Transports (including stdio and Streamable HTTP): https://modelcontextprotocol.io/specification/2025-03-26/basic/transports

[3] ToolLLM / ToolBench (tool-use dataset + evaluation): https://arxiv.org/abs/2307.16789

[4] StableToolBench (stable tool-use benchmarking): https://arxiv.org/abs/2403.07714

[5] tau-bench (tool-agent-user interaction benchmark): https://arxiv.org/abs/2406.12045

[6] AgentBench (evaluating LLMs as agents): https://arxiv.org/abs/2308.03688

[7] Sentence-BERT (efficient semantic similarity search via embeddings): https://arxiv.org/abs/1908.10084

[8] FAISS / Billion-scale similarity search with GPUs: https://arxiv.org/abs/1702.08734 and https://github.com/facebookresearch/faiss

[9] Robertson (BM25 and probabilistic relevance framework): https://dl.acm.org/doi/abs/10.1561/1500000019

[10] OWASP - Top 10 for Large Language Model Applications: https://owasp.org/www-project-top-10-for-large-language-model-applications/